Will a data set that was analysed in Python give the same result when tested with SPSS Modeler?

The Wine data and its components were given as input to SPSS Modeler. The steps that were carried out in Python (scaling and partitioning) were repeated in SPSS Modeler.

The same input conditions were given, keeping the customer segment as the target variable.

Feature scaling: (x - min(x)) / range(x). The number of factors was chosen based on the Python results (55% variance).
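As a rough illustration of the scaling formula above, here is a minimal Python sketch. The function name `min_max_scale` and the sample readings are made up for this example and are not taken from the Wine data or from SPSS Modeler itself.

```python
def min_max_scale(values):
    """Rescale a list of numbers to the [0, 1] interval: (x - min) / range."""
    lo, hi = min(values), max(values)
    value_range = hi - lo
    if value_range == 0:
        # A constant column has no spread; map every value to 0.0.
        return [0.0 for _ in values]
    return [(v - lo) / value_range for v in values]


# Made-up readings, for illustration only.
alcohol = [11.0, 12.5, 13.0, 14.0]
scaled = min_max_scale(alcohol)
print(scaled)
```

The smallest value maps to 0 and the largest to 1, so every variable ends up on the same scale before the factors are extracted.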

Partition: 80-20 (training to testing)
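An 80-20 partition can be sketched in plain Python as below. The helper `partition`, the seed and the 100 placeholder rows are assumptions for illustration; the split produced by SPSS Modeler will not necessarily match it row for row.

```python
import random


def partition(rows, train_fraction=0.8, seed=0):
    """Shuffle the rows and split them into training and testing parts."""
    shuffled = list(rows)                      # copy, so the input is not modified
    random.Random(seed).shuffle(shuffled)      # fixed seed keeps the split repeatable
    cut = int(round(len(shuffled) * train_fraction))
    return shuffled[:cut], shuffled[cut:]


# 100 placeholder row indices standing in for the real records.
rows = list(range(100))
train, test = partition(rows)
print(len(train), len(test))
```

Fixing the seed matters when comparing two tools, because a different random split can change the confusion matrix on its own.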

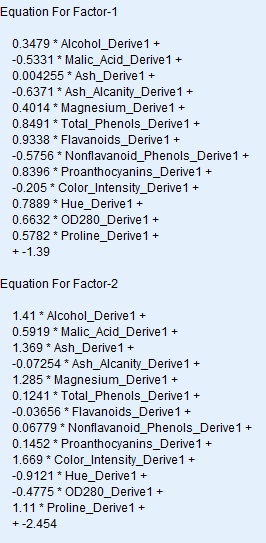

Number of components: 2 (the 14 variables were reduced to 2 factors)
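To give a feel for what reducing 14 variables to 2 factors means, here is a small principal component sketch using NumPy. It runs on random stand-in data, the function name `pca_reduce` is hypothetical, and it is not claimed to reproduce the exact extraction method used inside SPSS Modeler.

```python
import numpy as np


def pca_reduce(X, n_components=2):
    """Project X onto its first principal components via SVD."""
    X_centered = X - X.mean(axis=0)                 # centre each column
    U, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]                  # directions of largest variance
    scores = X_centered @ components.T              # the new factor values per row
    explained = (S ** 2) / np.sum(S ** 2)           # share of variance per component
    return scores, explained[:n_components]


# Random stand-in data: 50 rows, 14 columns (not the real Wine data).
rng = np.random.default_rng(0)
X = rng.random((50, 14))
scores, ratio = pca_reduce(X)
print(scores.shape, ratio.sum())
```

The sum of the two ratios is the share of total variance kept by the two factors, which is the kind of figure used when deciding how many factors to retain.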

A filter node is connected to the nugget so that only the factors and the customer segment are passed on to the logistic regression.

Finally, the analysis node gives the results in the form of a confusion matrix.
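For readers who want to check a confusion matrix by hand, a minimal Python sketch follows. The segment labels 1, 2, 3 and the actual and predicted values are invented for illustration and are not the results of this analysis.

```python
from collections import Counter


def confusion_matrix(actual, predicted, labels):
    """Rows are actual classes, columns are predicted classes."""
    counts = Counter(zip(actual, predicted))
    return [[counts[(a, p)] for p in labels] for a in labels]


def accuracy(matrix):
    """Correct predictions (the diagonal) divided by all predictions."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


# Invented example: three customer segments, one record misclassified.
labels = [1, 2, 3]
actual = [1, 1, 2, 2, 2, 3, 3]
predicted = [1, 1, 2, 1, 2, 3, 3]

matrix = confusion_matrix(actual, predicted, labels)
for row in matrix:
    print(row)
print(accuracy(matrix))
```

Every off-diagonal entry is a misclassification, so a single off-diagonal count of 1 corresponds to one wrongly predicted record.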

Let us see in detail.

Here are the results.

The confusion matrix:

So what is to be noted?

- The 14 variables were reduced to two factors.

- The equations of the two factors are given above.

- The logistic regression model gives the results with an accuracy of 97.23%.

- The SPSS Modeler prediction for the testing set is nearly perfect, with just 1 misclassification, which is the same as in Python (see the previous post).

Post your comments and views.